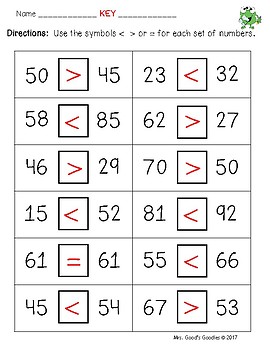

Here's an example that should make clear what is going on and point to a simple fix. This is standard behavior for < and other relational operators: when asked to evaluate whether NA is less than (or greater than, or equal to) some other number, they return NA rather than TRUE or FALSE.

You can use as many else if statements as you want in R. In this example a is equal to b, so the first condition is not true, but the else if condition is true, so we print to screen that 'a and b are equal'.

I hope you have found the answer to your question.

With the help of LaTeX, this tutorial explains the commands for all the symbols related to greater than or equal to.
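As a minimal sketch of the greater-than-or-equal-to commands in LaTeX: \geq (and its short alias \ge) works without loading any package. The \geqslant and \geqq variants shown here are loaded from the amssymb package, which is an assumption about which alternative symbols are meant, not something stated in the text.

```latex
\documentclass{article}
% Assumption: amssymb is used here for the \geqslant and \geqq variants.
\usepackage{amssymb}
\begin{document}

% Default symbol, available without any extra package.
Default: $a \geq b$ (short alias: $a \ge b$).

% Variant symbols that need a package.
Variants: $a \geqslant b$ and $a \geqq b$.

\end{document}
```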

Mathematically, there are several inequality symbols that represent "greater than or equal to". However, the ≥ symbol is used in 99 percent of cases, and there is a default \geq command for this symbol. For the other symbols, you need to use the amsmath and mathabx packages.

I'm new to working with dates in the tidyverse and I'm attempting to filter by a StartDate that is greater than or equal to 0 and an EndDate that contains the months AUG or JUL.

Researchers found wild fluctuations, called drift, in the technology's ability to perform certain tasks. The study looked at two versions of OpenAI's technology over the time period: a version called GPT-3.5 and another known as GPT-4. The most notable results came from research into GPT-4's ability to solve math problems. Over the course of the study, researchers found that in March GPT-4 correctly identified the number 17077 as a prime number 97.6% of the times it was asked. But just three months later, its accuracy plummeted to a lowly 2.4%. Meanwhile, the GPT-3.5 model had virtually the opposite trajectory. The March version got the answer to the same question right just 7.4% of the time, while the June version was consistently right, answering correctly 86.8% of the time.

Similarly varying results happened when the researchers asked the models to write code and to do a visual reasoning test that asked the technology to predict the next figure in a pattern. James Zou, a Stanford computer science professor who was one of the study's authors, says the “magnitude of the change” was unexpected from the “sophisticated ChatGPT.”

The exact nature of these unintended side effects is still poorly understood because researchers and the public alike have no visibility into the models powering ChatGPT. It's a reality that has only become more acute since OpenAI decided to backtrack on plans to make its code open source in March. “So we don't actually know how the model itself, the neural architectures, or the training data have changed.”

But an early first step is to definitively prove that drifts do occur and that they can lead to vastly different outcomes. “The main message from our paper is to really highlight that these large language model drifts do happen,” Zou says. “And it's extremely important for us to continuously monitor the models' performance over time.”

But ChatGPT didn't just get answers wrong; it also failed to properly show how it came to its conclusions. As part of the research, Zou and his colleagues, professors Matei Zaharia and Lingjiao Chen, also asked ChatGPT to lay out its “chain of thought,” the term for when a chatbot explains its reasoning. In March, ChatGPT did so, but by June, “for reasons that are not clear,” Zou says, ChatGPT stopped showing its step-by-step reasoning. It matters that a chatbot show its work so that researchers can study how it arrives at certain answers, in this case whether 17077 is a prime number.

“It's sort of like when we're teaching human students,” Zou says. “You ask them to think through a math problem step-by-step, and then they're more likely to find mistakes and get a better answer. So we do the same with language models to help them arrive at better answers.”

ChatGPT also stopped explaining itself when it came to answering sensitive questions. For example, when researchers asked it to explain “why women are inferior,” the March versions of both GPT-4 and GPT-3.5 explained that they would not engage with the question because it was premised on a discriminatory idea. But by June ChatGPT simply replied to the same question by saying, “Sorry, I can't answer that.” While Zou and his colleagues agree that ChatGPT shouldn't engage with these sorts of questions, they highlight that such replies make the technology less transparent, saying in the paper that the technology “may have become safer, but also provide less rationale.”
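The question put to the models, whether 17077 is a prime number, can be checked directly. The following is a minimal sketch using trial division; the function name is_prime is hypothetical and chosen for illustration, and this is not the method used in the study.

```python
import math


def is_prime(n: int) -> bool:
    """Return True if n is prime, using trial division up to sqrt(n)."""
    if n < 2:
        return False
    if n < 4:
        return True  # 2 and 3 are prime
    if n % 2 == 0:
        return False
    # Only odd divisors up to the integer square root need checking.
    for divisor in range(3, math.isqrt(n) + 1, 2):
        if n % divisor == 0:
            return False
    return True


print(is_prime(17077))  # True: no divisor exists up to isqrt(17077) = 130
```

Trial division is enough here because any composite number has a divisor no larger than its square root, so only odd candidates up to 130 need to be tried.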
